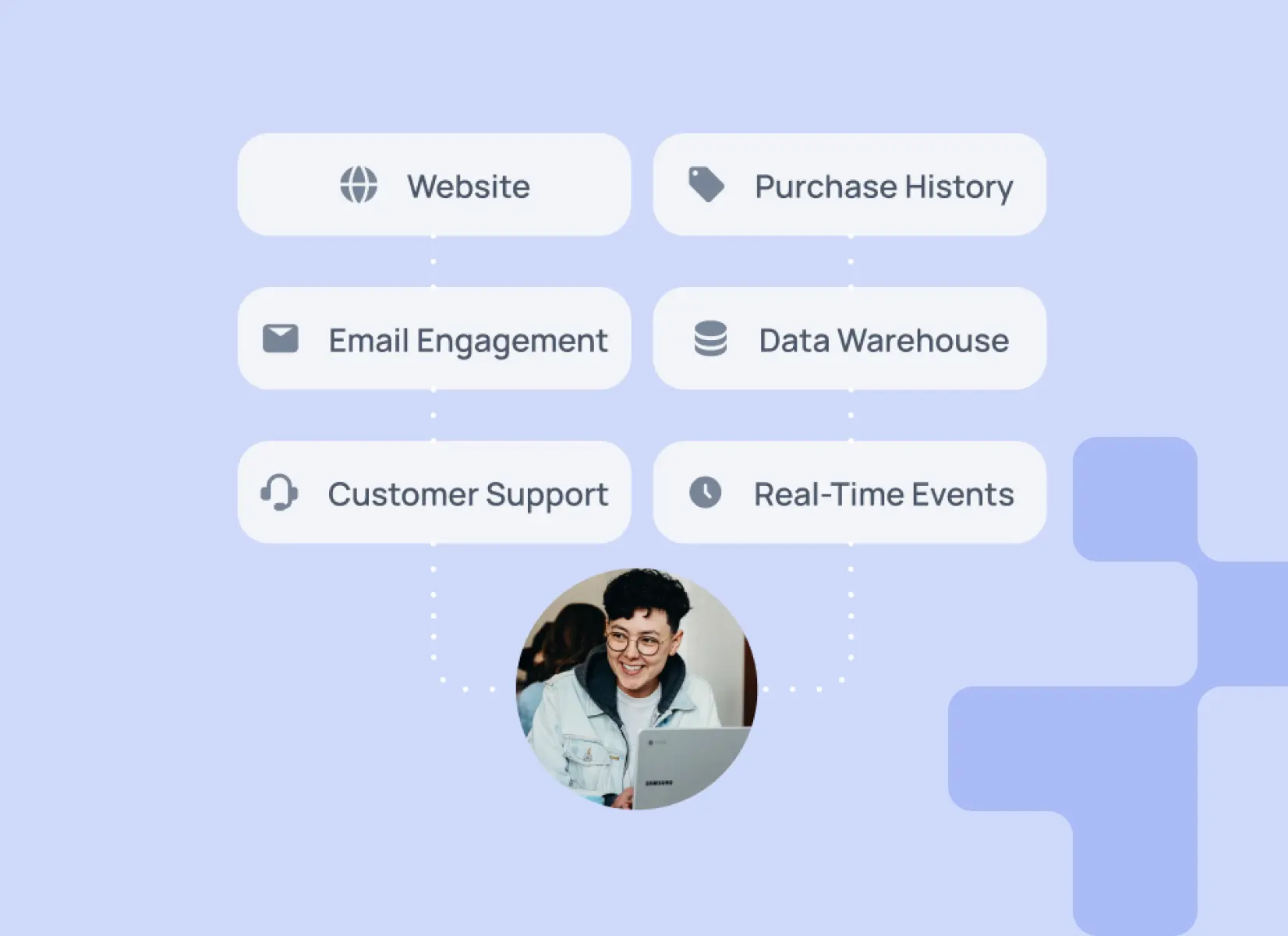

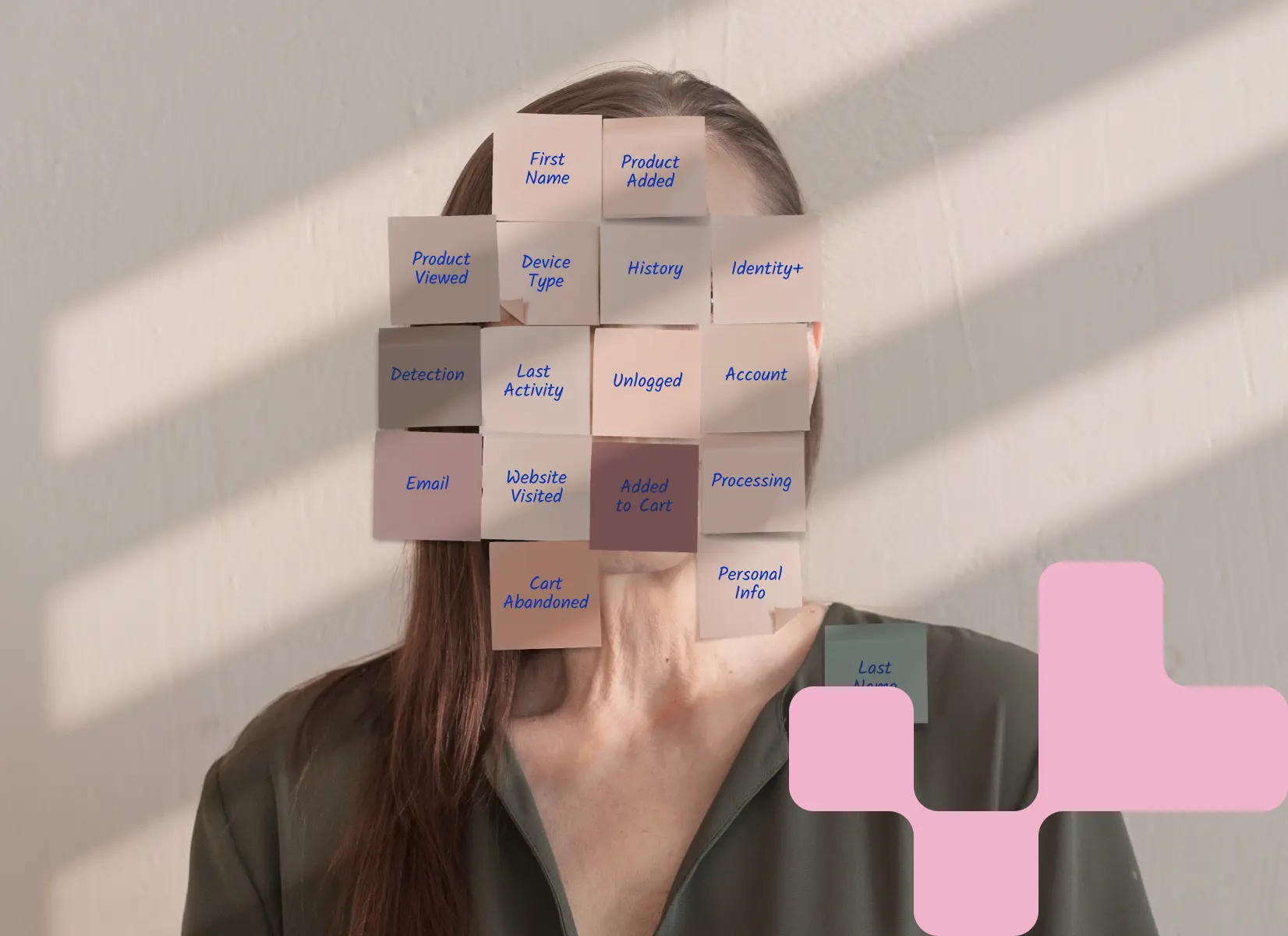

Unlock true customer 360

Seamlessly unify and activate customer data from your cloud data warehouse and beyond.

Power 1:1 personalization

Deliver hyper-personalized customer experiences to forge deeper connections and transform casual customers into loyal advocates.

Boost your bottom line

Discover the industry's latest tips, tricks, and trends to elevate your customer marketing strategies.

